There is a common perspective on the practice of education, intended as a criticism, I think, which proposes that a visitor from the past transported to the present would be amazed and bewildered by so many areas of civilization (travel, medicine, farming), but would feel completely at home in K-12 or university classrooms. As an educational researcher, I admit this claim has always troubled me. Was the process of passing on knowledge and developing important skills optimized centuries ago, despite all of the folks like me who study how people learn and how the processes supporting learning might be improved? If I disagreed, what would I identify as a counterexample, or how, at the very least, would I justify the time, effort, and resources people like me have invested in changing the status quo?

My Interest in Individualization

A general topic that has long been at the core of my personal research interests has been individualization. It seems obvious that learners differ on important variables that impact learning. Some have greater aptitude than others. Some, due to an endless list of differences in life experiences, at a given point in time have significant gaps in relevant background knowledge and prerequisite skills. For economic and historical reasons, our approach to assisting student learning largely ignores these differences. Our system, despite claims, fails to actually meet students where they are to most efficiently move them forward. The usual notion of “where students are” also ignores differences in goals, interests, and whatever else might come under the general heading of motivation.

Those who have followed my posts over the years will likely recognize that much of what I have done has focused on evaluating approaches that make use of technology to expand the flexibility educational systems can practically offer. I will identify two such topics for those who might want to explore my past posts – mastery learning and technology-supported tutoring. I admit that these seemingly logical opportunities have not yet yielded the benefits in application I had hoped, and this is the topic I want to examine in this post.

Research in the social sciences, which would include applied research in education (e.g., classroom learning), has notorious weaknesses, but also unique challenges. For example, recent criticism offered to the public notes the high rate at which published research cannot be replicated. We seem to be in an era in which funding for science in general has been questioned, so with cutbacks those of us working in more challenging areas have reason to be concerned. Yes, I said more challenging. I agree the “basic sciences” are of great importance and deserve support, but think of the claim I used to offer when I taught the research section of Introduction to Psychology – the chemicals in the test tube, the electrons in the circuit, or the planets in space don’t think about how they feel like reacting today. The rules that explain such behaviors may be intricate and difficult to ascertain, but at least most are reliable. The challenges social scientists face are simply different, but the general trend has been toward greater understanding.

Back to the thought experiment about the visitor from the past

If one assumes progress should have happened, but it either hasn’t or at least not to the degree that seems reasonable, is there reason for optimism? Are optimists delusional? What are optimists up against when it comes to criticism of present practices and seeking funding and attention for new approaches?

Changing a massive system with highly ingrained beliefs and behaviors is tremendously difficult. New ideas struggle to take hold and mature within this environment. There is an “intellectual pessimism” used to resist deep exploration of changes that are theoretically logical and justified by basic research. It dismisses what I have decided to describe as the “you are doing it wrong” plea for continued experimentation. I doubt a search for other uses of this phrase would turn up much, but it is a phrase I have decided captures the attitude I think typifies the resistance others have identified.

The phrase implies criticism of researchers who insulate themselves from scrutiny of their “big ideas” by attributing poor outcomes to implementation failures rather than to flaws in the ideas themselves. In other words, why do “big ideas” continue to resurface over time when previous attempts to apply them have not been successful? Put most simply, it is about excuses.

The “you are doing it wrong” explanation works like this. When a widely adopted educational innovation – learning styles, discovery learning, whole language reading, AI tutoring, open classrooms, etc. – produces disappointing or mixed results, proponents rarely concede the theory is wrong. Instead they argue: the idea is sound, but practitioners didn’t execute it faithfully or well enough. The failure belongs to the implementers, not the framework.

This rationale functions as an unfalsifiable escape hatch. Any negative evidence gets reframed as a measurement of implementation quality rather than a test of the underlying idea. The theory can never lose, because every failure is a fidelity problem.

In education, common variants are typified by the following:

“Teachers didn’t receive adequate training” – used with constructivism, project-based learning, differentiated instruction, AI

“It wasn’t implemented with fidelity” – the research or theoretical components were not followed with sufficient care.

“The conditions weren’t right” – class sizes, demographics, resources, culture

“It was a watered-down version” – the pure form was never really tried

With these excuses, it is the grain of truth that makes them plausible. Of course, there is always the possibility that the excuse is valid. This pattern is worth naming clearly in writing about learning science, because it explains a lot about why education cycles through fashions without accumulating settled knowledge the way other applied fields do.

Are classroom uses of AI the most recent examples of “you are doing it wrong”?

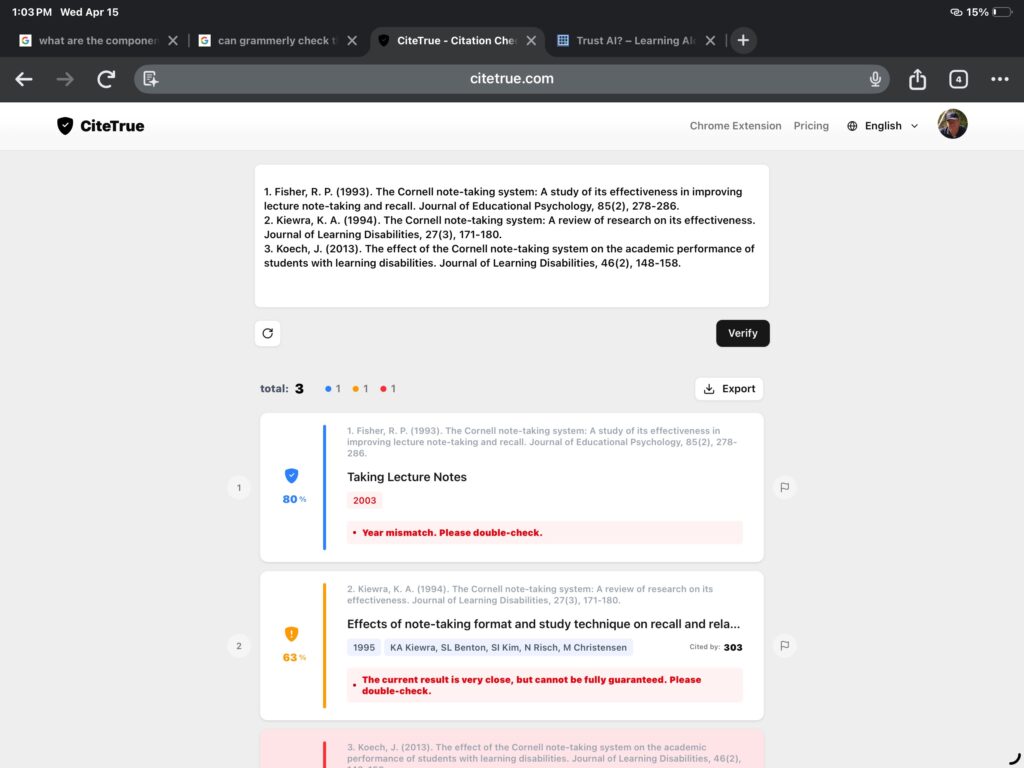

AI applications in classrooms represent recent examples of promising innovations, but also potentially of an impotent fad (e.g., Gerlich, 2025). Claims of cheating instead of learning abound, and while theory and carefully controlled research point to logical and demonstrated benefits, it seems fair to argue that educators are concerned about most student use.

Recently, I have encountered multiple accounts that propose “you are doing it wrong”. I intend to develop an extended analysis of the core ideas of these claims in a future, more analytical post, but I might quickly summarize here by explaining that true success in the use of AI is most likely when certain conditions involving student motivation, metacognitive proficiency, and working memory are met. In many cases, these variables are not functioning at desirable levels.

Several writers have backed a three-level AI use model proposing that some levels (the lowest and the highest) are likely to be successful and the middle level, which is presently most common, is less likely to produce satisfactory results.

The three levels have the following characteristics:

Zone 1: No AI Involvement

At this level, learning occurs without any AI assistance. While learning happens, it is often “capacity-constrained” because the learner must spend significant time and effort on execution and task completion, leaving less bandwidth for higher-order reflection.

Zone 2: Scattered, Half-Hearted Use

This is characterized by using AI for minor tasks like fixing sentences, checking facts, or tidying paragraphs. It often produces the worst learning outcomes. The learner still carries nearly the full cognitive load but adds the overhead of managing AI interactions without gaining significant cognitive savings. Note: this summary paraphrases the authors’ description. My version would add having the student use the AI tool to perform the task based on simplistic instructions.

Zone 3: Committed, Strategic Delegation

This level involves offloading entire categories of substantive work to AI to free up genuine cognitive capacity. This freed bandwidth is then redirected toward tasks AI cannot do, such as critiquing frameworks, questioning assumptions, and making complex judgment calls. This zone is where “transformative learning” is thought to live, provided the course design is intentional about how and why tasks are delegated.

My suggestion for making sense of these differences is to take a familiar task and work through what these different zones might look like in practice. I think learning to write makes a good case.

In this example, Zone 1 is easy – no use of AI. Zone 2 might include simply asking the AI tool to complete an assignment for you or perhaps using a tool such as Grammarly to check spelling and grammar. What would Zone 3 look like? Perhaps you might use an AI tool to suggest a list of topics you might address based on your general goal, or perhaps create a draft and then ask the AI for a critique based on concerns you have about your initial effort.

Summary

I hope it is obvious how “you are doing it wrong” would apply to how educators may allow students to use AI. The challenge, then, is to evaluate whether such uses are an example of a typical educational fad or are actually limited because the learner is not doing it right.

Source

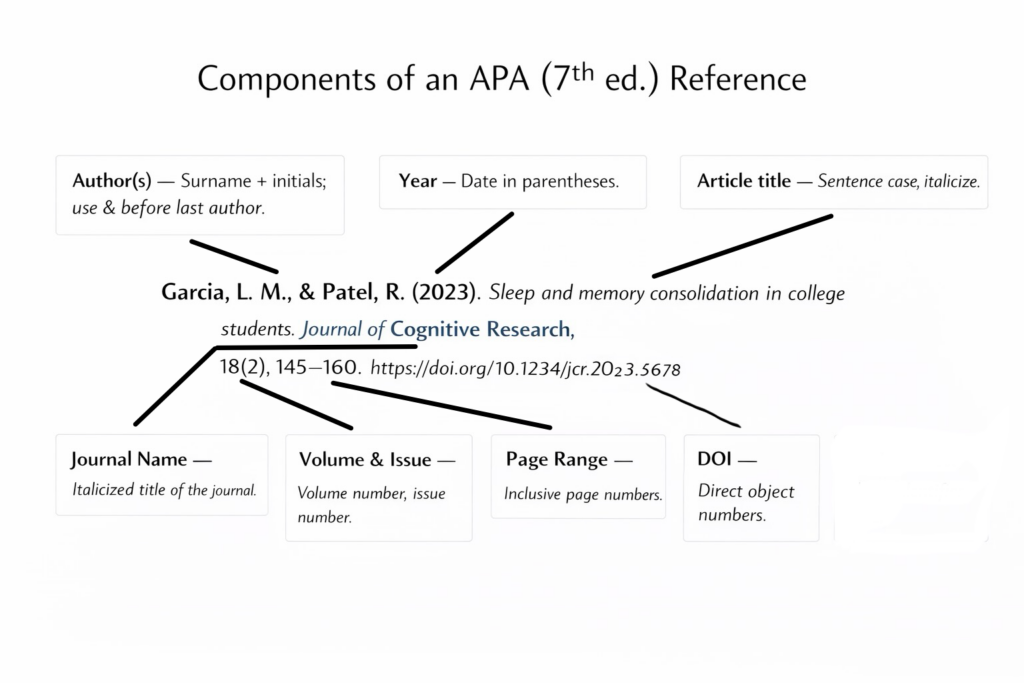

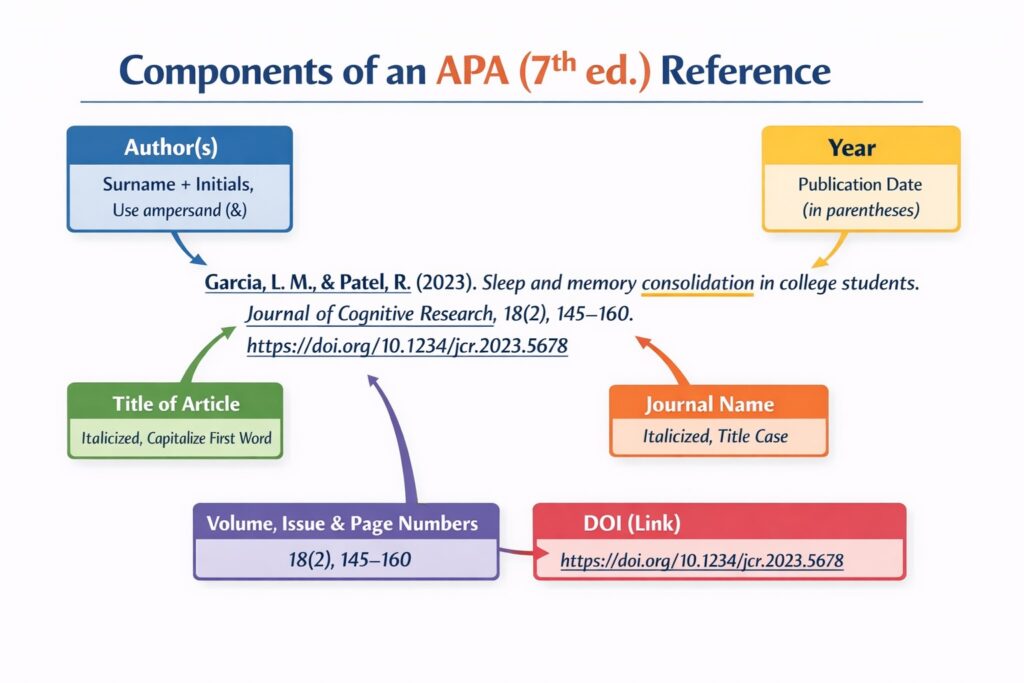

Gerlich, M. (2025). AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking. Societies, 15(1). https://doi.org/10.3390/soc15010006
