Surveillance capitalism, a term popularized by the scholar Shoshana Zuboff, describes the collection of information about people for the purpose of economic gain. Zuboff’s work focused on surveillance capitalism as practiced by social media companies, paying particular attention to a) users’ lack of understanding of how extensively information about their online behavior was being collected and integrated and b) the effort of companies to provide online services in such a way that users would be more committed to the service and provide more behavioral data in the process.

I have no training as an economist and I likely misunderstand many of Zuboff’s interrelated arguments involving economics, the psychology of confirmation bias and behavior modification, legal issues associated with informed consent, and factors such as the “network effect”. I do have some experience offering online content complete with ads and I have some experience applying both ad blockers and coding that enables the identification of ad blockers. I also have some experience making use of online services that are attempting to implement other approaches to collecting and sharing revenue for online services.

I would describe the question I am attempting to answer as this – Can capitalism offer an effective alternative to surveillance capitalism or will the greed of some online companies eventually lead to government intervention? I suppose the answer to the question could be other than the two options I identify, but I am betting things will go one way or the other.

The use of an online social media service involves the interaction of 3 and perhaps 4 parties: you as the user, the content creator, the service (e.g., Google, Facebook, Blogger), and possibly the company offering an ad (actually a combination of the company advertising and the ad delivery service). Each party has costs and benefits in the interaction. In the most common present model, a user attempts to use a service – e.g., read a blog post on Blogger. The user reads content on this service at the cost of providing personal information collected by the service (Google). The service (Blogger, a service of Google) has the potential to recover the cost of providing the infrastructure through the collection of personal information and possibly through revenue generated from ad clicks. The ad company, which provides the infrastructure for serving ads, is compensated for the ads clicked by those who want their ads to be viewed. The content creator is compensated for their work (their cost) in creating content when an ad associated with their content is clicked. This is a balanced system, or at least it is proposed to be so.

Consider what happens to the present system when one of the parties involved finds the existing conditions unfair or devious. This would be the case when users (viewers) of content within this system object to surveillance capitalism because they find the cost of revealing their personal information out of balance with what they receive. They might decide to deploy an ad and cookie blocker to reduce the personal information that is revealed. This prevents the infrastructure provider from receiving the benefit of the information harvested and any funds provided from ad clicks. The infrastructure company is then providing a free service while still paying the costs of providing the infrastructure. Likewise, the content provider continues to spend the time (a resource) involved in generating content and receives no compensation for this labor, and the ad company receives no income because no ads are viewed and clicked. In solving the problem of unwanted surveillance, the user has defunded all of the other parties involved, and in the long run this will likely have consequences. For example, the infrastructure company could refuse to display requested content to users who block the accompanying ads. Content creators could look for other outlets for their content or at least reduce the amount or quality of the content they produce.

Are there solutions that existing or new companies can apply to bring these parties into some fair balance? I think some serious efforts are starting to emerge.

First, there is the subscription model that has been successful with streamed music. Some of the same companies now focused on music are expanding their model to include podcasts. To access podcasts affiliated with a service, users will have to pay the price of subscription. In some cases, this could mean the subscription they pay for music would also include podcasts. Content creators would be paid a small amount for each time their podcasts were served. This model could be extended to other content types – e.g., Medium for written material. What seems to be happening at present is that the more popular creators are compensated and those who receive less attention can offer content but are not compensated.

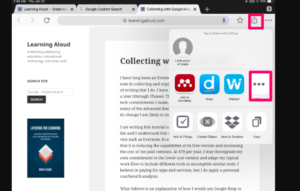

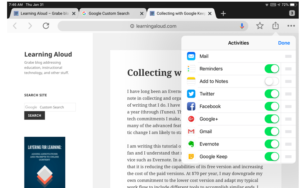

A second service I think has great potential is the Brave browser [https://brave.com/]. You can download this software for pretty much any device. The browser is really part of a service that involves three capabilities. First, the browser blocks cookies and scripts. This capability can be controlled by the user as a general approach (everything is blocked) or as a blocking capability turned on and off depending on the site. Second, the more general service associated with the browser allows users to commit an amount of money that is distributed to content providers/services in proportion to the amount of time spent on the sites visited. Content creators must enroll (no cost) to be compensated in this manner. Each month, the sites visited are listed for the user, and the user can drop any site from the list so that it is not compensated. Money is not forwarded to sites until they register. Finally, the service is just rolling out a potential compensation opportunity for users. This approach substitutes ads delivered through the Brave browser service for the ads that normally accompany the content when viewed with other browsers. So, users are paid to view ads, but the data normally collected via cookies and scripts are not allowed to pass. This final component of the Brave model is just being tested at this time. Brave takes a share of the money both when users offer compensation for content/service and when companies offer ads through Brave. These funds provide compensation for the infrastructure and company.
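The contribution part of this arrangement is simple arithmetic, and a small sketch may make it concrete. The code below is only my illustration of the idea as described above, not Brave's actual implementation: the function name `split_contribution`, the site names, the use of whole cents, and the rounding down are all assumptions I made for the example.

```python
def split_contribution(budget_cents, seconds_by_site, registered, dropped=frozenset()):
    """Divide a monthly budget across sites in proportion to time spent.

    Hypothetical illustration only. Returns two dicts:
    `paid` for sites that have registered, and `held` for sites that
    have not registered yet (their money is not forwarded).
    """
    # Sites the user removed from the monthly list receive nothing.
    eligible = {
        site: secs
        for site, secs in seconds_by_site.items()
        if site not in dropped and secs > 0
    }
    total = sum(eligible.values())
    paid, held = {}, {}
    if total == 0:
        return paid, held
    for site, secs in eligible.items():
        # Assumption: whole cents, rounded down.
        share = budget_cents * secs // total
        if site in registered:
            paid[site] = share
        else:
            held[site] = share
    return paid, held


# Example: a $10.00 monthly commitment spread over three made-up sites.
time_spent = {"a.example": 600, "b.example": 300, "c.example": 100}
paid, held = split_contribution(1000, time_spent, registered={"a.example", "c.example"})
print(paid)  # shares for registered sites
print(held)  # shares waiting on sites that have not enrolled
```

Dropping a site from the list simply removes it from the calculation, so the remaining sites split the same budget among themselves.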

So, there are multiple opportunities here (as I understand the possible combinations). Users could use Brave to block ads. Users could contribute and block ads. Users could receive compensation for viewing ads and block ads. Users could block ads, contribute, and receive compensation for ads viewed via Brave.

Again, it is possible to consider how the user, content creator, and infrastructure provider are compensated when these different combinations are implemented. If a user blocks ads and nothing else, the user receives content or uses a service at no cost, but the content creator or service provider is not compensated. If the user blocks ads and submits a voluntary contribution, the user receives access to the content or service and the provider is also compensated. If the user blocks ads, but accepts ads from Brave and offers no contribution, the user benefits in multiple ways (content and revenue) and views ads, and the providers receive some compensation. The difference between this final combination and the most common existing experience is that the user views ads, but does not provide personal information that can be shared.
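For readers who like to see the combinations laid out, here is a rough sketch that encodes the cases just described. It reflects only my reading of the model; the names `Outcome` and `outcome` are made up for this example, and whether creators actually share in Brave ad revenue is my assumption rather than something I can confirm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    user_sees_ads: bool
    user_is_paid: bool
    creator_compensated: bool
    personal_data_shared: bool


def outcome(blocks_ads, contributes=False, accepts_brave_ads=False):
    """Who gets what under each combination (hypothetical model).

    blocks_ads=False stands for the common present model: ads plus
    tracking. The other two flags only matter for a Brave user.
    """
    return Outcome(
        # Ads appear either from the site itself or through Brave.
        user_sees_ads=(not blocks_ads) or accepts_brave_ads,
        # Only Brave's ad program pays the viewer.
        user_is_paid=accepts_brave_ads,
        # Assumption: creators receive a share of Brave ad revenue.
        creator_compensated=(not blocks_ads) or contributes or accepts_brave_ads,
        # Blocking cookies and scripts stops the data collection.
        personal_data_shared=not blocks_ads,
    )


for label, flags in [
    ("present model", dict(blocks_ads=False)),
    ("block only", dict(blocks_ads=True)),
    ("block + contribute", dict(blocks_ads=True, contributes=True)),
    ("block + Brave ads", dict(blocks_ads=True, accepts_brave_ads=True)),
]:
    print(label, outcome(**flags))
```

The only row in which the creator goes unpaid is "block only", which is the defunding problem described earlier.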

I hope that I am understanding the Brave long-term view appropriately in claiming that the content creator potentially receives revenue from both contributors and from ads shown by Brave. It would be possible to compensate users, but not content creators when ads are displayed.

If Brave gets their approach off the ground and attracts sufficient users, I predict the model will change such that content creators will make an exclusive commitment to have their content viewed through Brave and users will have to accept viewing ads for these sources unless they contribute money. This would guarantee a revenue source for users and content creators. Note – I am guessing at a long-term model here. If this should happen, I would also predict that a similar model would then be adopted by other sites such as Facebook and Twitter. Google would be most harmed by this model because they would then be in competition with Brave to make money by selling and displaying ads.

In summary, I see alternatives to surveillance capitalism should these competitive models take hold. The present surveillance capitalism model is still much more popular, but public awareness of the collection and sharing of personal information is encouraging ad/cookie blocking. Should ad and data collection blocking become common, the lack of revenue opportunities for certain categories of content and service creators will eventually extinguish their willingness to work without compensation.

If capitalism doesn’t take on surveillance capitalism, government intervention seems very likely.
