From time to time, I take a look at a topic I was interested in, say 5 or so years ago, and ask what has happened since. Have classroom strategies that seemed to be enjoyable and productive survived and how have they matured? Here is an example of what I mean.

A decade ago, I was interested in the potential of technology for allowing greater individualization of instruction. My primary interest was in technology that allowed ideas from the 1970s-80s called mastery learning to become practical. Mastery learning proposed that group-based instruction largely ignored differences in aptitude and background knowledge, leading to frustration and learning challenges because the group advanced whether individuals were ready or not. To relate this to a widely recognized alternative based in technology, consider the approach now allowed by the Khan Academy.

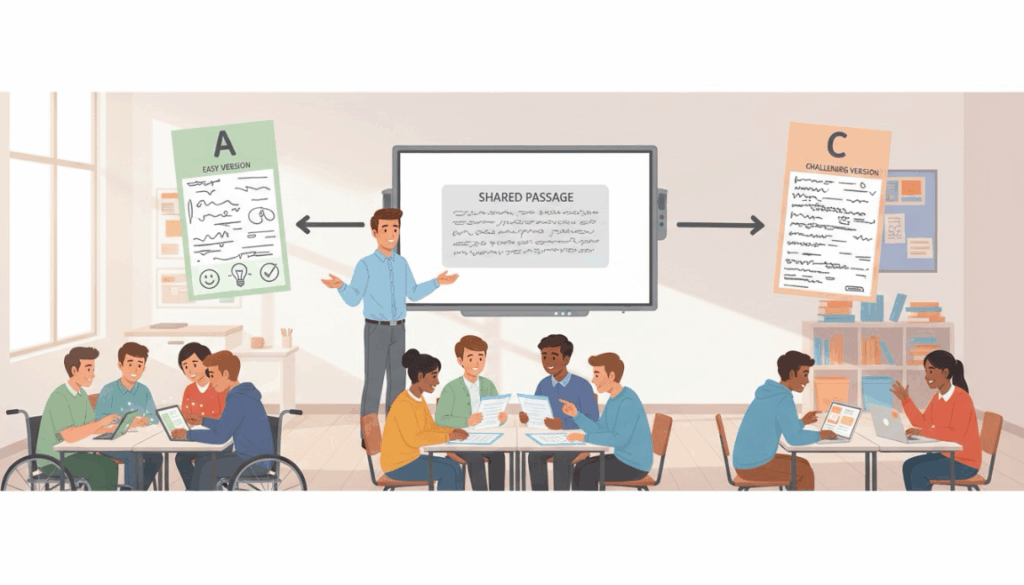

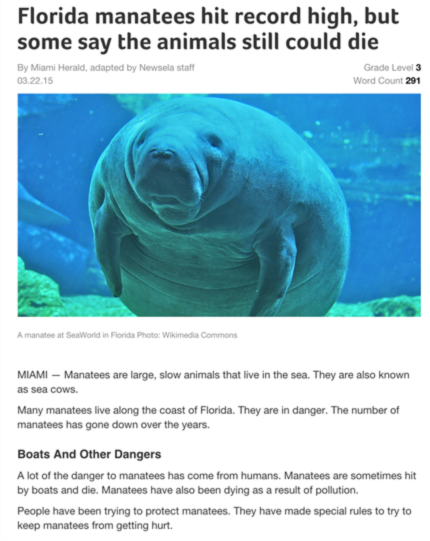

A different approach, maintaining more of a group-based strategy, was proposed by Newsela. This company argued that reading content (individual articles) could be presented at different reading levels, allowing a class to read versions of the same material while preserving social opportunities such as class discussions. This approach made sense to me, especially when applied to reading tasks that might be described as reading to learn (e.g., assignments in science, social studies, etc.). The focus on informative content rather than fiction had obvious implications for present student learning and for the future. The following two images contrast the same content presented at different reading levels.

I wrote multiple posts describing Newsela and how it might be implemented. Others were offering similar observations.

Individualizing literacy instruction with Newsela (2015)

Layering Newsela (2017)

Not all good ideas work or are practical, so I decided to follow up a decade later and see how the company and the product seem to be doing.

Adoption Level

It is difficult to get accurate information about student use. Newsela currently reports that it is used by 3.3 million teachers and 40 million students, the exact total it reported in 2016. Newsela has a lite and a pro level, and the lite level has attracted a lot of attention and occasional use. Occasional is a guess, as I could not find stats on the level of activity. For some classrooms and individuals, reading an occasional story would be a productive activity. I am assuming that the combination of those using the lite and the paid levels accounts for the differences in usage statistics that are reported.

The paid version is better suited to using the tool as part of the curriculum. The startup’s paid product costs between $6 and $14 per student. Newsela is sold at a rate of $6000 per school or $1000 per grade. Newsela estimates that gross bookings have grown 115% over the years of the pandemic, and that revenue grew 81%. More than 11 million students were using Newsela under a licensing agreement by the end of 2021.

The version of Newsela I described in my late 2010s posts has changed substantially. Newsela has significantly evolved in recent years to become more AI-driven, expanding both its suite of educational products and the ways users interact with its content and assessment tools. A secondary emphasis on writing has emerged. Usage trends reflect a shift toward greater integration of artificial intelligence and differentiated instruction, as well as changes in accessibility and assessment features for teachers and students.

Efficacy Studies

My tendency when advocating, or at least describing, an instructional strategy implemented through a commercially available tool or product is to search for published research that evaluates the approach I want to describe. The following are descriptions of two studies I located.

WestEd (2018) Newsela efficacy study: Building comprehension through leveled nonfiction content.

Classes of fifth-grade students from two districts were randomly assigned to a Newsela or a Control condition. Reading instruction in the Newsela classes was modified to include at least two Newsela articles per week – one in class and at least one at home. Students in the Control condition relied on their normal reading curriculum. The study ran for 14 weeks and used the difference in STAR pre and post-performance scores as the dependent variable. Student compliance with the Newsela homework expectation varied widely, with 55% meeting the one-per-week expectation. When those meeting the expected level of engagement were compared with the control group, their achievement gains were significantly greater.

Literacy gains from weekly Newsela ELA use

This year-long study used differences between pre and post-MAP reading assessments as the dependent variable. The classes of third- and fourth-grade educators participated in either the Newsela or the control condition. The Newsela classes were asked to read at least two stories and take one multiple-choice test per week. Teachers in the control condition relied on their own selection of reading material, with the largest source being content they had found through Google sources. Fourth-grade students, but not third-grade students, achieved at a significantly higher level in the Newsela condition.

Why can’t I find peer-reviewed published studies?

Often, I am frustrated when I cannot find studies that directly support the strategy I want to describe. This is the case with Newsela and I have been thinking about why this is the case.

Newsela has engaged outside agencies (e.g., WestEd) to conduct research using their products, but these studies are available as what I would describe as technical reports and don’t seem to appear in scholarly journals. After reading these reports, I can see that if I had been asked to review the research for publication, I would also identify issues that would cause me to suggest that the study not be published. In the two studies described above, I see flaws in the research design that allow alternate explanations for the positive results.

Applied research is often very difficult because those implementing the research have their own issues and priorities. Sometimes a methodology involves tight controls from the beginning, and sometimes it seems that the original design is allowed to slip as unanticipated issues come up.

For example, in the first study I describe, the plan was to have a control group and a Newsela group with one in-class and one homework reading assignment a week. It turned out that the homework assignment was ignored in many cases, and to generate significant evidence that Newsela was productive, the researchers compared those who did the homework against the control group. This may not be important, but it could also mean that the Newsela group now consists of more motivated readers than the control group, and that this interest in reading, rather than the Newsela content and approach, was what created the difference in the development of reading skill. It is unclear to me from reading the description why expectations for completing the homework, such as including completion as part of the grading scheme, were not implemented. I can imagine a different controversy if what I propose were implemented, as you would then have extra reading required in the Newsela group and not in the control group. Perhaps the ideal approach would be to maintain control of all of the reading assignments within the classroom setting so that the time allocated could be matched.

The second study I have described is limited by what I would describe as a lack of clear identification of the intended independent variable. What has always attracted my interest in Newsela was the group-based, but individualized, approach the content allows. Each Newsela document is available at multiple levels (5) of complexity. This allows readers at different levels of aptitude and skill development to read a variant of the same content so that discussion and a social element of instruction can be maintained. My personal interest in technology-supported learning has always been based on the potential of individualization. One argument some make about many technology applications that allow for differences in rate of learning is that students are isolated and miss out on the social benefits of a classroom setting. Newsela offers an alternative approach that maintains the social setting.

This study introduces a different, or at least an additional, difference between the Newsela group and the control group. The authors report that when teachers selected the reading content for the control condition, this material differed in category from the Newsela treatment. Teachers in the control condition were described as relying on Google searches to find content fitting the topics they covered, and this content contained significantly less “nonfiction” content. A cleaner approach, more consistent with what I think is the unique Newsela content, would be to compare the impact of a single version of articles versus multiple versions of the same articles.

Summary

As a commercial venture Newsela seems to be doing well. It has a solid base of schools that have committed to purchasing the program. My criticism of the weak methodologies used in evaluation efforts is mostly a function of my interest in the impact of the individualization efforts the resources provide. Having current and nonfiction content is important, but the strategy on which the company originally made its name has not been rigorously evaluated.

It now seems educators could use any of several AI tools to create similar content. A prompt such as “rewrite this content at a level appropriate to fifth grade students” could be applied to any content a teacher could upload. Given this option, the value of Newsela to a district would depend on the advantages it provides: the time savings to teachers and the constant access to new content.
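For readers curious what the do-it-yourself option might look like, here is a minimal sketch in Python. It only builds the prompts a teacher could paste into whatever AI tool they use; it does not call any service. The function name `build_leveled_prompts`, the grade levels, and the extra wording about keeping facts the same are my own assumptions for illustration, not anything Newsela or a particular AI tool prescribes.

```python
# Hypothetical helper: builds one rewriting prompt per target grade level.
# The grade levels and prompt wording are illustrative assumptions only.

def build_leveled_prompts(article_text, grade_levels=(3, 5, 7, 9, 12)):
    """Return a dict mapping each grade level to a rewriting prompt."""
    prompts = {}
    for grade in grade_levels:
        prompts[grade] = (
            f"Rewrite this content at a level appropriate to grade {grade} students. "
            "Keep the facts and the order of ideas the same so that all versions "
            "can support one class discussion.\n\n"
            f"{article_text}"
        )
    return prompts


# Example: one short passage, five prompts (one per assumed level).
prompts = build_leveled_prompts("Honeybees share the location of food by dancing.")
for grade, prompt in prompts.items():
    print(grade, "->", prompt.splitlines()[0])
```

Asking for the same facts in the same order at every level is what would keep the versions usable for a shared class discussion, which is the feature that drew me to Newsela in the first place.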
