One thing I miss as a retired academic is going to the office daily and having the chance to share ideas on common interests. My background was in educational psychology, and topics such as AI and learning would have been relevant not only to me but also to the people I had lunch with and passed in the halls. I would have been interested in my colleagues’ take on the pros and cons of AI in classroom settings. Were they concerned about cheating? Had they encountered students who cheated, and how did they know for sure that their suspicions were justified? Had they modified the assignments they had always used, or perhaps abandoned them as untrustworthy?

I still find myself thinking about such topics, and despite no longer having firsthand experience, I wonder what I would do if I were still working. When something has been that important in your life and self-view, it doesn’t leave you, and I cannot help but continue to explore such topics and share my findings and opinions through outlets like this.

Going beyond the challenges the internet created, AI poses a tremendous challenge, bringing both opportunities and risks. Cheating obviously falls in the risks category. It challenges how accomplishments are evaluated and how the results are shared with learners and other interested parties (e.g., employers, those involved in competitive selection processes for limited-enrollment programs, the instructors of subsequent courses). It also challenges our efforts to craft assignments we are confident will contribute to student learning. If tasks are not completed as we assume, we cannot trust the markers we use to evaluate what students know, nor can we rely on those markers to guide decisions about when to move on and what prior understanding we can assume in new instruction.

Without my colleagues, I must now rely more on what I can read or find online to form my own opinions. This is a difficult and relatively recent problem, and little that I would regard as proven seems to be available. There is plenty of advice, plenty of personal perspective, and no shortage of folks willing to offer books on the topic. I might as well offer my own perspective on a specific instructional situation since, at present, ideas focused on specific tasks in a specific type of classroom are the only ones I don’t immediately find myself arguing with.

The Opposite of Cheating

I have been reading The Opposite of Cheating: Teaching for Integrity in the Age of AI (Gallant & Rettinger, 2025). It is well written and well referenced, but it is the type of source whose specific recommendations I find myself both applauding and rejecting. Typically, a negative reaction stems from a suggestion being impractical in my own circumstances. I doubt it is reasonable to expect authors to create a master model specifying when each idea can be applied; that would add too much complexity, and readers need to be active participants in deciding what they should take from a resource. Anyway, one point the book makes sparked what follows.

Writing to Learn

Written products played a significant role in some of the courses I taught. I assumed these products served as (a) an incentive to read the sources I assigned and to listen to the presentations made by me and by students, (b) a way to demonstrate understanding and, depending on the assignment, to consider applications, (c) a task that engaged students in the kind of thinking that leads to understanding and retention, and (d) a way to evaluate students. Having students write in isolation is one of those common tasks that has come under suspicion because of AI.

Back to The Opposite of Cheating. One of the authors’ general suggestions is to evaluate the process, not the product. I wrote a post some time ago making a very similar point. I think it is helpful to explain why emphasizing what I would describe as subprocesses allows not only what might be called surveillance but also a superior instructional approach.

Similarity to the strategy of showing your work

The requirement, probably most familiar from math classes, that students show their work was partly a check on whether a student had done the work, but just as important, it was a record of the processing involved. Both the student and the teacher had access to the student’s externalized thinking. The student could use this visible record when the process broke down and had to be adjusted, and it gave someone else the opportunity to follow the student’s logic. The concept of externalized thinking has many applications for those who propose that cognitive research is relevant to educational issues.

My long-term interest in showing your work has focused more on writing, and specifically on an application often called writing to learn. Given a writing-to-learn task, whether assigned by a teacher or taken on as a personal strategy, a student could, of course, simply start writing or feed a prompt to their AI tool of choice. Here again, the “show your work” strategy can serve both as a check that the work was done and as a support for deeper thinking.

I have been influenced by the logic and justifications offered by advocates of personal knowledge management and the second brain. These concepts, when considered carefully, are clearly process-oriented: engaging purposefully and thoughtfully in specific processes improves the resulting products, and externalizing processes that could be performed internally enables them to be performed more skillfully. I have long been interested in the Writing Process Model (Flower & Hayes) and its variations. These researchers sought to develop a model that identifies the processes of writing and how those processes interact to create a written product. One benefit they proposed for such a model was the identification of component skills, allowing more efficient development of proficiency in each skill. Identifying the processes could guide both the topics researchers pursue and the instructional practices used in the classroom.

Connection with the topic of mitigating cheating

Let me start with this claim: an externalization requirement can serve both to ensure that a person actually executed a process and to advance the educational goal associated with the assigned task. I think this works well when the goal is writing to learn.

I have already indicated that writing to learn (or learning to write) can be broken down into subprocesses. Rather than relying on the Writing Process Model, allow me to offer a simpler approach for this situation.

In order to complete a writing-to-learn assignment, a student must:

Read the content

Identify what she feels are the important ideas in the content

Process this collection of ideas to understand and apply them

I assume this is acceptable as a gross-level description. If an educator relies only on the product turned in, then, with AI available, the educator must guess whether a given student actually performed any of these tasks.

Those of us who make use of Personal Knowledge Management tools engage in these processes even though we are not accountable to an educator responsible for our skill and knowledge development. We do these things because we believe they deepen our understanding and strengthen our ability to craft better products.

While we read, we use a variety of tools that leave a record allowing someone else to agree that we have in fact read.

Most of these tools involve highlighting and annotation as part of the reading process. The highlights and notes serve as an external representation of what we regard as important ideas in the content.

We then extract highlights and annotations from their original context so we can store and manipulate these elements more effectively. Separating these elements and making them independent allows long-term access and further processing such as linking, tagging, and secondary elaboration. We value this growing, ever-modifiable collection as what has popularly come to be called a second brain, one that can be searched and explored for new insights and for generating products.

The tools we use are ever improving and the skills in using these tools are being constantly scrutinized in search of greater efficiency and effectiveness.

The tools are there, and free options are easy to find. Learning to use such tools also has long-term benefit, since the skills are relevant to lifelong learning. Why not teach these techniques to students and take advantage of the potential side benefit of accountability?

Hypothes.is as a starting point – try it, you might like it

I first used Hypothes.is because I was interested in social note-taking with students. Simply put, this perspective argues that there may be benefits to a system that allows students to share notes. What did others find interesting or valuable in an assigned reading, and what might their comments on the passages they highlighted reveal that others had not considered?

The same tool can be used so that an individual’s highlights and notes are available only to the instructor rather than to the entire class. This covers “Was it read?” and “Were the ideas I thought important identified?”

The process for exporting from Hypothes.is works like this:

How to Export Annotations

Activate Hypothesis: Go to the webpage or document you’ve annotated and open the Hypothesis sidebar.

Open Sharing Menu: Click the “Share” button.

Select Export: Choose the “Export” tab.

Select Annotations: Use the dropdown to choose which user’s annotations to export (your own, a specific group, etc.).

Choose Format: Select your desired file type (e.g., HTML, plain text).

Export: Click the “Export” button to download the file, or “Copy to clipboard”.
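For instructors comfortable with a little scripting, the same highlights and comments can also be pulled programmatically rather than through the sidebar. The sketch below assumes Hypothesis’s public search API (`https://api.hypothes.is/api/search`, filtered by `user` and `uri`) and assumes each returned annotation stores the comment in `text` and the highlighted passage in a `TextQuoteSelector`’s `exact` field. The username, page URL, and sample annotation are hypothetical, and private or group annotations would also need an API token, which this sketch does not handle.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed: Hypothesis public search endpoint.
API_SEARCH = "https://api.hypothes.is/api/search"


def search_url(username: str, page_uri: str, limit: int = 200) -> str:
    """Build a search URL for one user's annotations on one page."""
    params = {"user": f"acct:{username}@hypothes.is", "uri": page_uri, "limit": limit}
    return f"{API_SEARCH}?{urlencode(params)}"


def fetch(url: str) -> dict:
    """Download and decode the JSON response (network call; public annotations only)."""
    with urlopen(url) as resp:
        return json.load(resp)


def highlight_pairs(response: dict) -> list[tuple[str, str]]:
    """Return (highlighted passage, comment) pairs from a search response."""
    pairs = []
    for row in response.get("rows", []):
        quote = ""
        # The highlighted text is assumed to live in a TextQuoteSelector.
        for target in row.get("target", []):
            for selector in target.get("selector", []):
                if selector.get("type") == "TextQuoteSelector":
                    quote = selector.get("exact", "")
        pairs.append((quote, row.get("text", "")))
    return pairs


# Hypothetical response, shaped like what fetch() is assumed to return.
sample = {
    "total": 1,
    "rows": [{
        "text": "Links externalized thinking to show-your-work.",
        "target": [{"selector": [
            {"type": "TextQuoteSelector", "exact": "evaluate the process, not the product"}
        ]}],
    }],
}

for quote, comment in highlight_pairs(sample):
    print(f"> {quote}\n  {comment}")
```

In practice an instructor might call `highlight_pairs(fetch(search_url("student01", "https://example.org/reading")))` for each student and reading; both arguments here are placeholders.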

A screenshot of Hypothes.is in use. Hypothes.is is a browser extension, so the content must be online or something you can open in a browser (e.g., a PDF). The content window on the left is where the reader reads, highlights, and annotates. The highlights and notes appear in the column on the right.

Organize and Elaborate

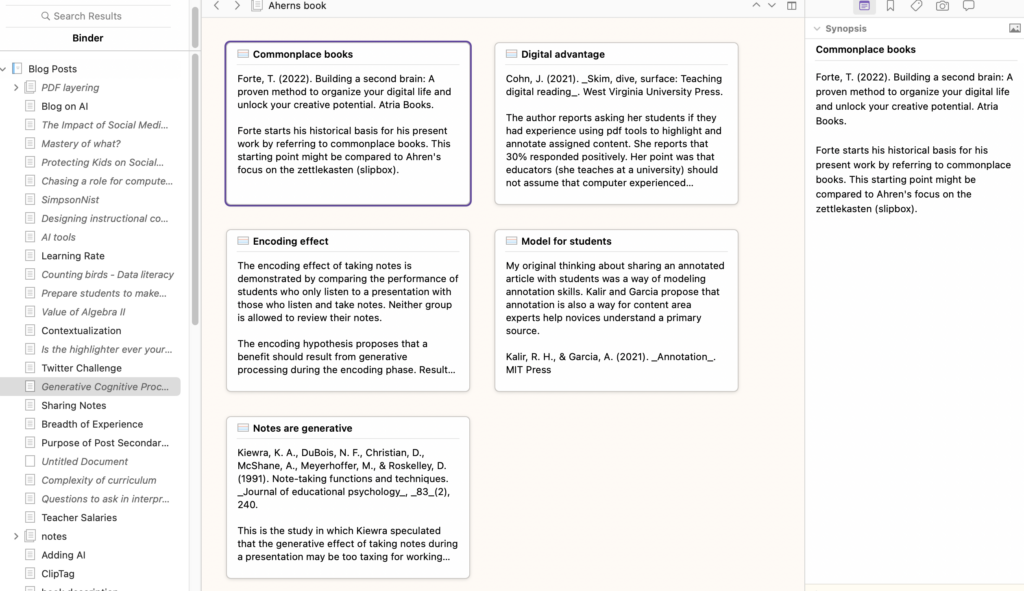

At this point, I would bring individual elements into a tool such as Obsidian, which I would not hesitate to introduce to college students. This tool is designed to store a large collection of individual idea notes, tag them, create links among them, and extend individual notes by generating secondary notes (elaboration). I mention this tool as one opportunity, not because there are no other options. Perhaps mentioning it will pique the curiosity of those willing to go a little deeper.
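To make “individual idea notes” concrete, here is a minimal Python sketch; it is not a description of how Obsidian itself works. It relies only on the fact that an Obsidian vault is a folder of Markdown files in which `[[double brackets]]` create links and `#word` creates a tag. The `IdeaNote` structure, the file-naming rule, and the example content are hypothetical choices for illustration.

```python
import re
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class IdeaNote:
    quote: str                                      # highlighted passage
    comment: str                                    # the reader's own elaboration
    source: str                                     # title of the reading
    tags: list[str] = field(default_factory=list)   # tags must not contain spaces
    links: list[str] = field(default_factory=list)  # titles of related notes


def note_filename(note: IdeaNote, max_words: int = 6) -> str:
    """Hypothetical naming rule: first few words of the comment (or the quote)."""
    words = re.findall(r"[A-Za-z0-9]+", note.comment or note.quote)
    return " ".join(words[:max_words]) + ".md"


def to_markdown(note: IdeaNote) -> str:
    """Render one idea as a standalone Markdown note with links and tags."""
    lines = [f"> {note.quote}", "", note.comment, "", f"Source: [[{note.source}]]"]
    if note.links:
        lines.append("Related: " + ", ".join(f"[[{t}]]" for t in note.links))
    if note.tags:
        lines.append(" ".join(f"#{t}" for t in note.tags))
    return "\n".join(lines) + "\n"


def save(note: IdeaNote, vault: Path) -> Path:
    """Write the note into a vault folder and return its path."""
    path = vault / note_filename(note)
    path.write_text(to_markdown(note), encoding="utf-8")
    return path


example = IdeaNote(
    quote="evaluate the process, not the product",
    comment="Process evidence serves two purposes",
    source="The Opposite of Cheating",
    tags=["writing-to-learn", "AI"],
    links=["Show your work"],
)
print(note_filename(example))
print(to_markdown(example))
```

Keeping one idea per file is what allows the later linking, tagging, and secondary elaboration; the source link also preserves the trail back to the student’s original highlight.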

There are also more basic ways to do this. In the stage just before writing, you might open a document in any word processor and copy and paste individual notes or highlights into it. As you proceed, you might cut and paste within this working document to better organize topics and integrate them into your final product. Even with the many personal knowledge management tools I use, I often take this simple approach when I reach the final stage of a project. I cut and paste chunks of text and citations from the content I have accumulated into a common document. Often, this is not just about collecting ideas from a single source but about bringing together ideas from multiple sources. I open this “collection” document in a separate word processing window and work from this narrowed set of material toward a draft of the product I am creating.

Some writing tools even offer visual ways to do this. For example, Scrivener can display notes as index cards that can be moved around a space to explore organizational options. Even if you do not intend to use a tool like this, visualizing the approach may be helpful. The “corkboard” view in Scrivener is shown below. Here you can see how individual project-related notes have been placed on the corkboard, where they can be dragged around to find an optimal structure.

Summary

This post focuses more on a concept for discouraging AI cheating than on a detailed tutorial for using the tools involved. The core idea is that tasks can be assigned that both give students practice applying the subskills of the writing-to-learn process and document that students completed those subskills. I have identified specific tools and tactics, but there are likely alternatives for everything I have used as an example.

Source

Gallant, T., & Rettinger, D. (2025). The Opposite of Cheating: Teaching for Integrity in the Age of AI (Vol. 4). University of Oklahoma Press.
