I was excited when Google announced its investment in developing NotebookLM as a tool for learners. First, I encountered the description of its new program, Learn Your Way, which promised to personalize textbooks in various ways, including explanations based on personal interests. Examples of what this would look like were provided, but to submit your own content for the “Learn Your Way” treatment, you first had to indicate your interest and then wait to be selected. I am still waiting. However, Google has also announced enhancements to the existing NotebookLM that make several proven study strategies more straightforward to implement, along with a couple of unique ways to repurpose instructional text as a podcast or as something resembling a slide-based lecture. The updates available to everyone are what I will describe here.

Before anyone gets overly excited, what Google presently makes available requires that an instructor or learner be able to load content into NotebookLM. There is no legal way to do what I will describe with your existing commercial textbook, whether paper or digital. Companies producing instructional materials may either collaborate with tech companies or develop similar capabilities, but they are not doing so at present. I wrote a couple of textbooks earlier in my career. When my collaboration with a textbook company ended, I was given complete control of the copyrights, and I now have full-length chapters I can use for demonstrations. This is what I am doing here. NotebookLM notebooks can be shared, and I will make the content on which this tutorial is based available for you to explore.

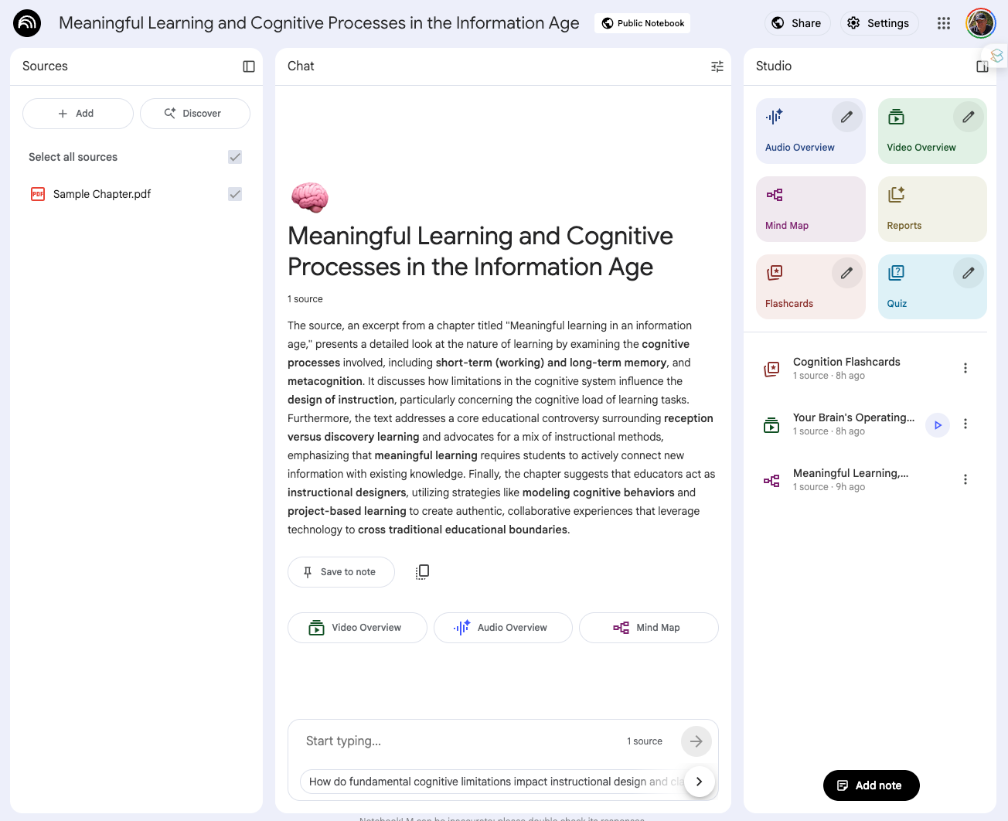

For those unfamiliar with the newest updates, NotebookLM presents as three vertical panels. The leftmost panel shows the content you have uploaded to NotebookLM and lets you upload additional content or open individual files for viewing. The middle panel is where AI prompts are entered and the output from those prompts is displayed. The rightmost panel is where the older and new AI tools are activated and where the output from these tools is stored for later display.

As soon as NotebookLM is launched, the middle panel displays a summary of the source files that have been added. Source files that have been loaded are included in this summary only if they are selected (see the check box). NotebookLM was developed as a RAG (retrieval-augmented generation) tool, which means prompts are applied to the content you provide rather than to the massive corpus available to the model. The new “Discover” tool, available from the top of the sources panel, breaks with this approach. It allows a user to request additional information related to the purpose of a particular notebook, which can then be reviewed and added as an additional source.
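For readers who like to see an idea in code, the following is a toy sketch of the retrieval-augmented pattern described above. It is not how NotebookLM works internally. The function names (`retrieve`, `build_prompt`) and the sample source text are hypothetical, and simple word overlap stands in for whatever retrieval method a real system uses. The point is only that the prompt is built from sources the user has loaded and selected.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def retrieve(question, sources, selected, top_k=1):
    """Rank the *selected* sources by word overlap with the question.

    Word overlap is an assumed stand-in for a real retrieval method.
    Sources that are loaded but not selected are never considered.
    """
    q_words = Counter(tokenize(question))
    scored = []
    for name in selected:
        s_words = Counter(tokenize(sources[name]))
        overlap = sum(min(q_words[w], s_words[w]) for w in q_words)
        scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically by source name.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in scored[:top_k] if score > 0]


def build_prompt(question, sources, selected):
    """Assemble a prompt that restricts the model to the retrieved text."""
    hits = retrieve(question, sources, selected)
    context = "\n".join(f"[{name}] {sources[name]}" for name in hits)
    return (
        "Answer using only these sources:\n"
        f"{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical sources standing in for uploaded chapters.
sources = {
    "chapter1": "Generative activities such as authoring to learn improve retention.",
    "chapter2": "Flash cards support retrieval practice spaced over time.",
}

question = "How do flash cards help retrieval practice?"
print(build_prompt(question, sources, selected=["chapter1", "chapter2"]))
```

Calling `build_prompt` with only `chapter1` selected leaves the second chapter out of the prompt entirely, which mirrors the role of the check boxes in the sources panel.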

Before I continue, I want to identify one frustration I have with the way NotebookLM fits into my workflow. Developers have specific uses in mind when creating applications, and their vision may not include what an individual user prefers. My preference is to read, highlight, and annotate content before using an AI tool. While NotebookLM allows access to sources, it does not support highlighting or annotation, nor does it display highlights or annotations already present in uploaded material. The workaround is to export your highlights and notes and add them to NotebookLM as a separate source.

Mindmap

New capabilities appear in the rightmost panel. The audio overview (podcast) and Mindmap capabilities were already available and will not be covered here.

Quiz

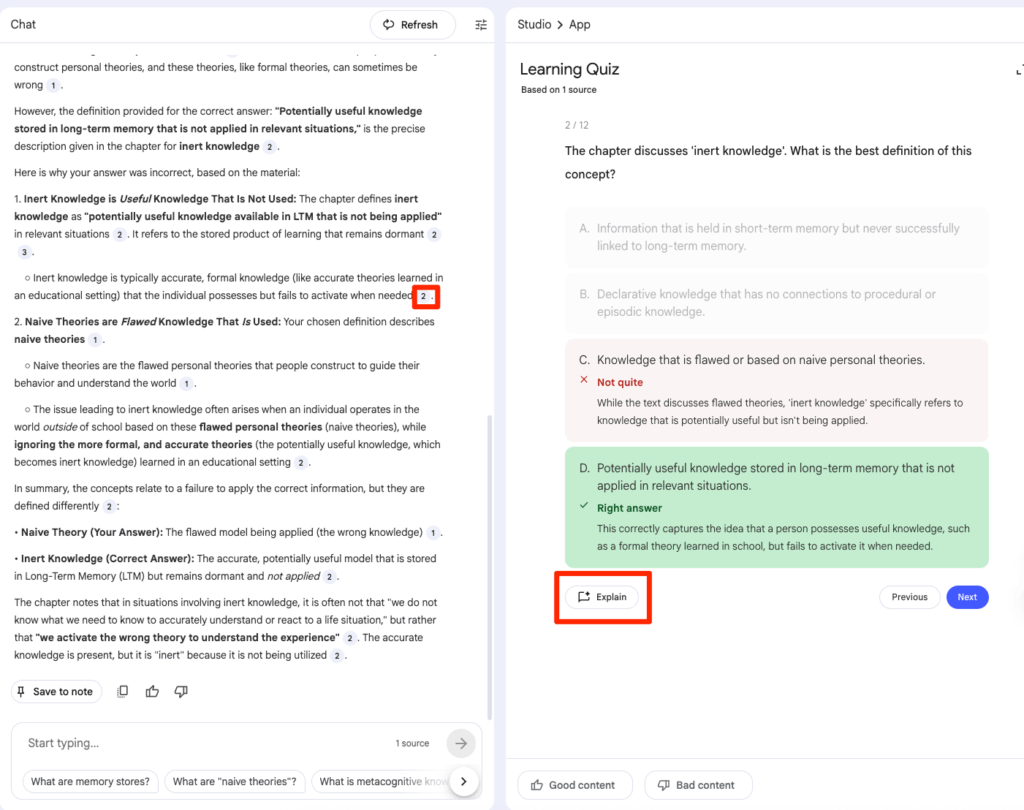

The new tool that first caught my attention was the quiz. I have explored various ways to have LLMs ask questions about designated content, but having a built-in tool for doing so is unique. The tool generates a double-digit set of multiple-choice questions that is then stored as an associated resource. I have no idea why the number of items varies. When the quiz is selected, the questions are displayed one at a time (see the accompanying image), and no matter which alternative is chosen, a comment is provided. The “Explain” button (see the red box in the accompanying image) shows the content from the text associated with that question. If you want more questions, the existing set can be deleted and a new set generated.

When using this tool, I think it is valuable to pay attention to your reactions and to remember that you are working with an AI tool. As someone who has had to generate multiple-choice questions for actual student exams, and knowing what I know about student reactions, I would not use some of the AI-generated questions on an examination. I thought the stems of some AI questions were somewhat vague or misleading, but the explanation clarified the logic justifying a given answer. If you understand this and are using the questions as a study experience, the ambiguity is probably fine. As a final comment in this post, I will explain why I prefer to ask an AI tool to generate open-ended (essay) questions on a specific topic and then wait to evaluate my response. Interacting with content in this way is more helpful.

Flash Card Tool

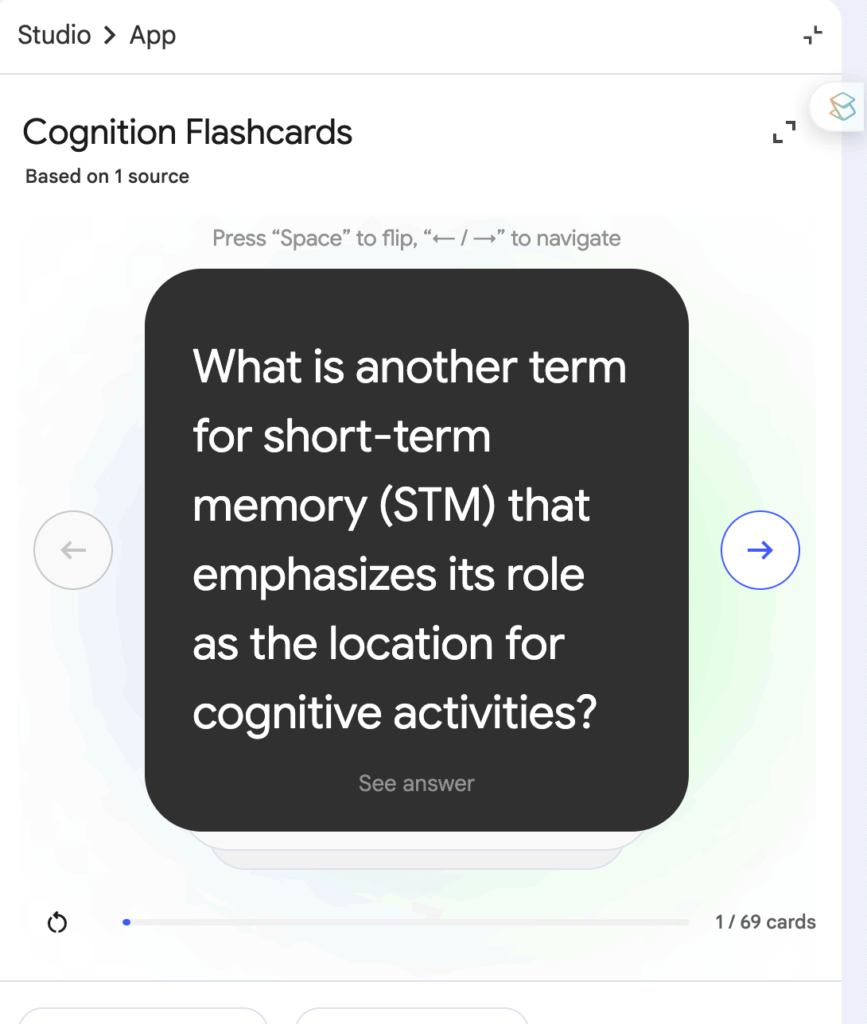

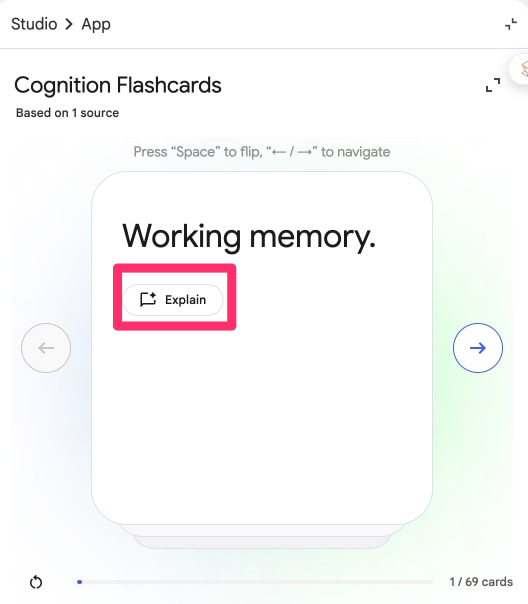

Flash cards are a useful tool for implementing memory enhancement strategies such as retrieval practice and interleaving. While relying completely on flash cards would not be wise, this approach is effective and useful for quick reviews spaced over time. Again, the “Explain” button (see the second of the accompanying images) offers a connection to the original content, which provides an opportunity to review beyond simply verifying that a response is correct. Note that NotebookLM generated 69 cards for the targeted chapter, so this tool covers the content in detail.

Video Overview

The video overview offers what seems like an extension of the existing audio option. It strikes me as the equivalent of a lecture based on a series of slides. Imagine a large lecture hall in which you see the screen on which an instructor is projecting her PowerPoint and you hear the audio but do not see the presenter. Having written this chapter, I found it an interesting experience to watch a lecture based on my own words. It is possible to download the video, so I decided to offer this download through YouTube if you want to see an example.

Just Use Chat

Before these built-in tools were added, NotebookLM was a way to interact with uploaded content using AI chat prompts. I find this capability more powerful than the tools that seem to recreate standard study experiences. The familiar tools are probably a way to engage less experienced learners, but I would argue that approaches such as debating and tutoring offer opportunities that take better advantage of the language capabilities of LLMs. The following is an example of a chat I had with the content.

[Prompt] Ask me a short-answer question about generative tasks and wait to evaluate my reply

According to the sources, what two specific categories of generative activities are highlighted for their value in improving understanding, retention, or transfer, and what general type of content processing is involved in these activities?

——————————————————————————–

I will now wait to evaluate your reply.

[Learner reply] I remember the chapter talked about responding to questions and I can’t remember another example from the book. How about writing to learn, but I don’t think that was in the chapter.

[AI response] That was a strong attempt, and your intuition about “writing to learn” was very close to one of the specified terms!

Here is the answer to the question, based on the specific terminology used in the sources:

The two specific categories of research emphasizing generative activities that improve understanding, retention, or transfer are labeled as:

1. “Authoring to learn”

2. “Teaching to learn”

These activities involve a “generative processing of content”.

Your suggestion of “writing to learn” is directly supported by the text, as “authoring to learn” is described as involving the preparation and implementation of writing tasks. This generative processing of content is suggested to be superior in many ways to studying only for personal use.

While responding to questions is certainly a critical activity discussed in the sources—often serving as an external strategy for evaluation and regulation that compensates for weak metacognition—it was not one of the two specific research categories given the label “generative activities” in this context.

[Added comment] What is not visible in my copy of this interaction are the numbered buttons NotebookLM inserts in its responses. These buttons provide access to the relevant sections of the original text.

Notebooks can be shared. You are invited to explore the Notebook that was the basis for this prompt.
