I found the Writing Process Model (Flower & Hayes, 1981; Hayes, 2012) helps me think about the development of writing skills and the specific application typically described as writing to learn. This model identifies the processes and the interaction among the processes involved in writing and has been used to guide both writing researchers and the development of instructional tactics.

The model provides researchers and instructional designers with a concrete framework to work with by identifying specific skills that can be studied as the source of individual differences in writing skills or targeted for development, assuming that greater proficiency with these skills will lead to more effective writing.

I first used this model to speculate how specific technology tools could support writers. For example, technology offers powerful ways to take and review notes, a method for planning by creating an outline or “mind map”, and a way to record text that allows for easy manipulation and revision. I have begun to think about the model in a different way brought on by easy access to AI for writing in general and in classrooms more specifically. Given the general goals of learning to write and writing to learn, when do specific uses of AI facilitate and when do these applications harm the development of the writing subprocesses as involved in each category of writing experience? Put another way, instead of thinking of AI as an all-or-nothing approach to creating written content, would it make more sense to evaluate the use of AI in impacting writing subprocesses and perhaps have students use AI more selectively?

The Writing Process Model

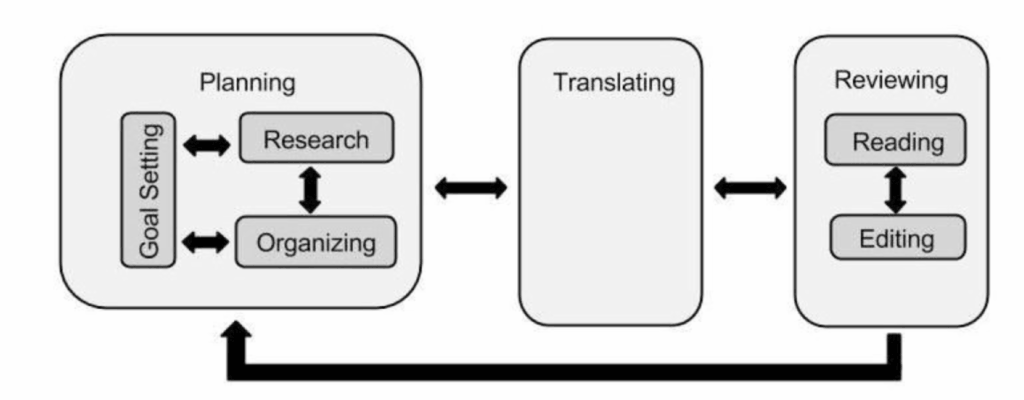

The model identifies three general components: a) planning, b) translation, and c) reviewing (see the illustration above). Planning involves setting a goal for the project, gathering information related to this goal, which we refer to as research, and organizing this information so that the product generated makes sense. The goal may be self-determined or the result of an assignment. Research may involve remembering what the author knows about a topic or acquiring new information. Research should also include identifying the characteristics of the audience. What do they already know? How should I explain things so that they will understand? Finally, the process of organization involves establishing a sequence of ideas in memory or externally to represent the intended flow of logic or ideas.

What many of us think of as writing is what Flower and Hayes describe as translation. Translation is the process of getting our ideas from the mind to the screen and this externalization process is typically expected to conform to conventions of expression such as spelling and grammar.

Finally, authors read what they have written and make adjustments. This review may occur at the end of a project or at the end of a sentence. Authors may also solicit advice from others rather than relying solely on their own review.

One additional aspect of the model that should not be overlooked is its iterative nature. This is illustrated in the figure, which presents the model using arrows. We may be tempted, even after an initial examination of this model, to view writing as a mostly linear process – we think a bit and jot down a few ideas, we use these ideas to craft a draft, and we edit this draft to address grammatical issues. However, the path to a quality finished product is often more circuitous. We do more than make adjustments in spelling and grammar. As we translate our initial ideas, we may discover that we are vague on a point we thought we understood and need to conduct further research. We may decide that a different organizational scheme makes more sense. This reality interpreted using our tool metaphor would suggest that within a given project we seldom can be certain we have finished the use of a given tool and the opportunity to move back and forth among tools is quite valuable.

This model describes the processes identified by Flower and Hayes, but ignores two other components. The first is the writing task, which consists of the assignment and any writing completed at a given moment. The other missing element is the long-term memory of the writer. Long-term memory, or existing knowledge, provides a source of information and strategies that the writer can use without resorting to new research.

Hayes (2012) updated this model to incorporate additional research and comments from colleagues and his own laboratory. Later work placed a strong emphasis on both revising the planning component and subsequent drafts, as well as on the role of working memory. Working memory recognizes that cognitive capacity is limited, meaning activities must fit within the existing capacity or perhaps be ignored. Processes demand less capacity as a function of practice and aptitude, and this relationship, among other topics, is vital in understanding changes that could occur for learners across the grade levels. For example, Hayes noted that keyboarding is more difficult for younger learners than handwriting and products produced on a computer would thus appear of lower quality. Cognitive demands do change with keyboarding experience, with keyboarding eventually becoming less demanding than handwriting. Other factors can also impact cognitive demands. In one example, Hayes noted studies in which requiring adults to write in all caps reduced the quality of the final product because transcription in this form is less practiced. As an aside, I could not help recognizing the tendency of some to write in all caps on social media and my perception of the quality of what is produced in that format. Anyway. Finally, Hayes recognized that writing was a motivated activity and differences in motivation could influence the processes.

One of the significant patterns in writing proficiency that Hayes and others (Bereiter & Scardamalia, 1987) associated with the limited capacity of working memory and the gradual development of proficiency in the cognitive writing subskills was the tendency to move from what they describe as knowledge telling to knowledge transforming. The first approach results in a dump of ideas from long-term memory triggered by the assignment and information that has already been recalled. In other words, a product can be generated without changing what is stored in memory or how this content is organized. Most instructors have a feeling for how this works. They have asked students to reply to an essay question and received at least some responses that seem to be everything students knew about the topic rather than a specific answer to the question. It can be hard to know if the student thinks they have answered the question or if this is just a frequently productive ploy. It is the first situation that shares characteristics with the idea of knowledge telling.

In contrast, writing classified as knowledge transforming requires that previously stored information be reorganized, reinterpreted, or extended based on speculation or insights. The writing task sometimes determines the difference, but transforming is more demanding and, when expected, is more likely to be produced by more capable writers. Writing to learn could involve either output, but the greater manipulation of ideas in knowledge transformation reflects the most significant benefit.

Klein (1999) offers a review organized around the writing processes hypothesized to be responsible for learning. A brief summary of some insights from this paper provides examples of tasks and skills that differentiate knowledge telling from knowledge transforming, also relating the distinction to the processes involved.

- Point of utterance (no revision and limited planning) – This explanation assumes that learning occurs in the attempt to generate comments on the topic. This category might be described as spontaneous writing – the learner is asked or personally commits to writing on a topic. An activity fitting within this category would be the five-minute writing tasks some college lecturers assign at the end of a class.

- Genre-related – This explanation focuses on the benefits of transforming ideas to the structure demanded of a specific genre. For example, in a “position paper,” a writer is expected to take one of several possible positions and generate an argument supporting this selection. In selecting, organizing, and connecting knowledge to fit the demands of a formal writing task, the learner creates an understanding that would not exist without the imposed task.

- Backward search – This explanation assumes that skilled writers formulate complex goals (characteristics of the desired product, audience needs, etc.) and then rework existing knowledge in terms of these goals. This seems a more generalized version of the “genre-related” explanation, requiring more sophisticated and complex problem-solving.

My own writing is generally linear with backtracking depending on my assessment of how well things are going. This impression is based on my own behavior and may not accurately reflect the ideal recursive approach. When I take on a project, I assess what I already know and have notes on a topic and then read and take notes on additional material. I generate a rough idea of how these ideas could be organized (some would create a formal outline) and begin developing a draft. Often, I realize I need to fill a hole in my mental outline or find a reference in support of what I am trying to accomplish and have to take a closer look at my large collection of digital notes or read and take notes on another source or two. Upon completion of this initial draft, I reread what I have down to take care of lower-level deficiencies (spelling, grammar) and often to add a little more material here or there so the document makes more sense or hangs together better. When writing for myself and without an external reviewer, I tend not to make major structural revisions. However, when I submit a paper for review, I do sometimes have to make larger adjustments even if the content is deemed useful.

Where does AI play a role? Where should AI play a role? I have worked through my thoughts on these questions based on my own circumstances and also on what should be the circumstances for those in more formal learning settings. I want to produce content that meets a reasonable standard of quality, but I am not that interested in becoming a more accomplished writer. I want what I write to be a reflection of my experiences and what I have learned, and I assume I can learn from the process of writing. I am willing to invest time in this objective. I propose that others generate a similar analysis for themselves based on personal goals or goals for others they may be responsible for educating.

How do I use AI? I primarily use AI to facilitate the research and planning components of my writing process and to perform some of the revision tasks. These emphases are consistent with my desire to learn from writing and my lesser interest in improving my writing skills. I am not suggesting everyone apply my priorities; I am suggesting it is possible to identify priorities and use AI strategically and efficiently.

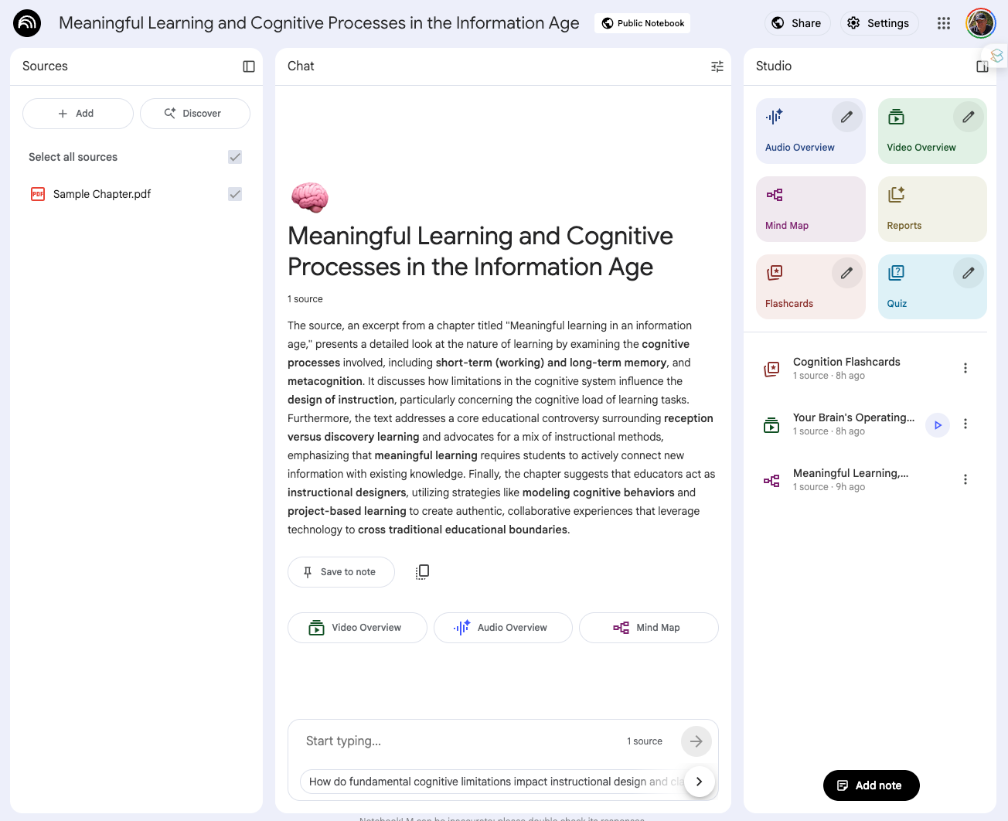

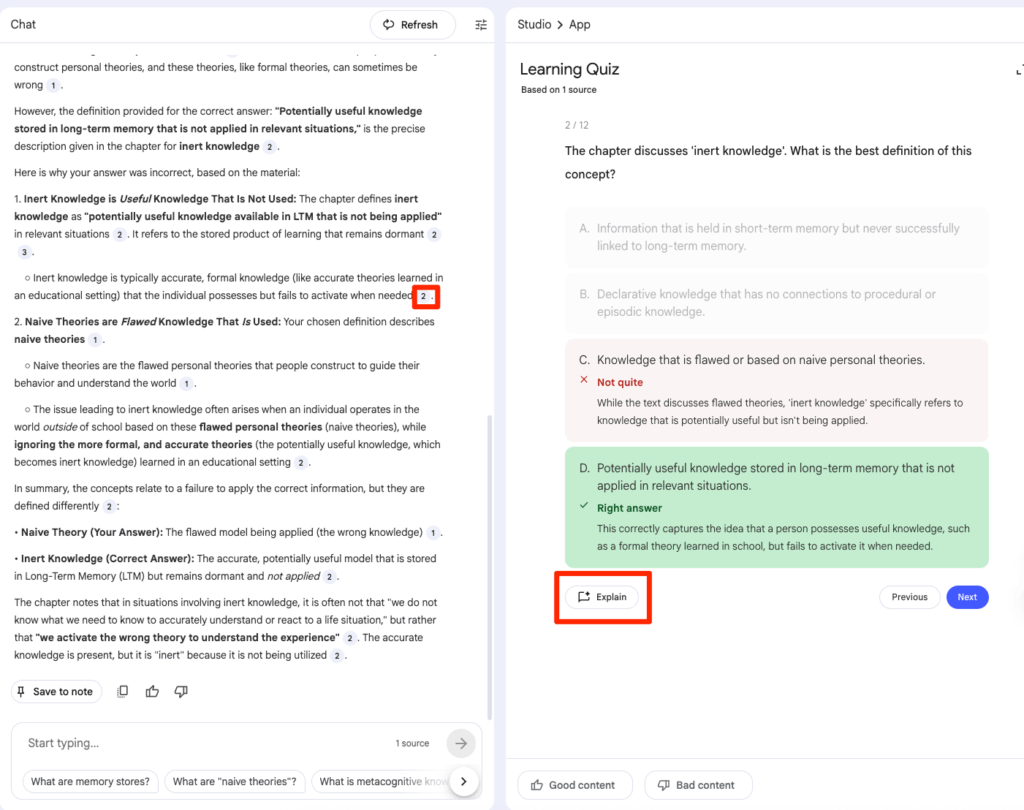

Here is how this works. I have developed a large collection of notes consistent with methods of personal knowledge management and a technology-enabled second brain. This is a multidecade-long process that involves reading widely in the educational technology and educational psychology literature. When I get an idea for a writing project, I use AI to query this body of content for ideas related to my intended project. Based on the content I produce and queries for related information (some generated by AI), I review this new content and supplement my notes on the topic. I then often use AI (typically NotebookLM) to provide a structure for the intended project based on my collection of notes. I consider this proposed approach when generating my initial draft.

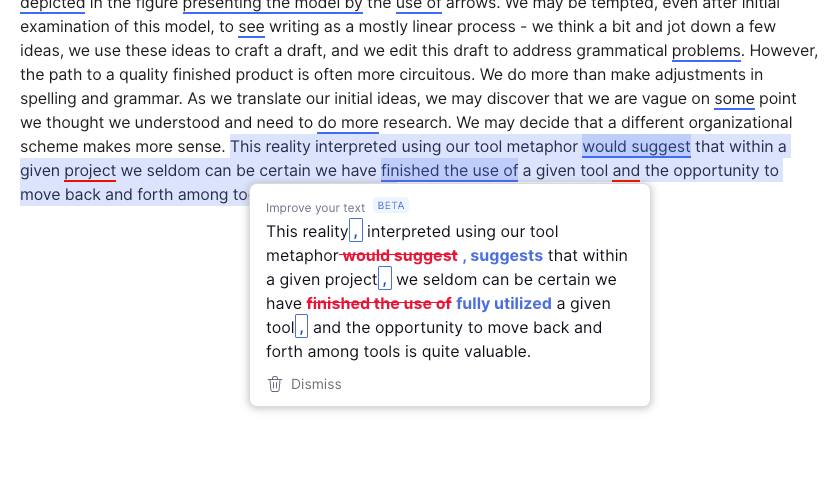

I use Grammarly heavily when I write. I use the pro version so I get constant feedback not only on spelling and basic grammar, but also more substantive recommended changes at the paragraph level. Grammarly identifies issues I should consider and offers suggestions. I suppose this could be a valuable learning opportunity, but I admit I just select the recommended changes that sound good. Grammarly has just pushed a major update that offers even more capabilities, but I have yet to explore which might be useful to me.

The use of AI for research, planning, and text revisions does not limit my writing to learn. I think it is quite reasonable to associate AI capabilities with specific components of the writing process model, as applied to writing and writing to learn, in order to accomplish tasks that are not essential to developing writing skills or learning opportunities. The stickier problem presents itself when you must consider how to control the use of AI.

Sources

Bereiter, C., & Scardamalia, M. (1987). Two models of composing processes. In C. Bereiter & M. Scardamalia (Eds.), The psychology of written composition (pp. 1-30). Erlbaum.

Flower, L., & Hayes, J. R. (1981). A cognitive process theory of writing. College Composition and Communication, 32(4), 365-387.

Hayes, J. R. (2012). Modeling and Remodeling Writing. Written Communication, 29(3), 369-388. https://doi.org/10.1177/0741088312451260

Klein, P. D. (1999). Reopening inquiry into cognitive processes in writing-to-learn. Educational Psychology Review, 11, 203-270.
